Revision as of 15:55, 7 June 2024

Linux runs 91.5% of supercomputers and is widely used for professional work,[2] so Artificial Intelligence (AI) on Linux is common not only in proprietary applications but also in FOSS / FLOSS projects. Examples include voice changers, image generators, Large Language Models (LLMs), and much more. If you are feeling frustrated or confused, this guide covers how to get results on NVIDIA cards - commonly used in the AI industry [CITATION NEEDED] - and on Advanced Micro Devices' (AMD) ROCm-capable Graphics Processing Units (GPUs). Both driver stacks can run AI applications, but whether a given application works also depends on that project's support for each stack.

- Keep in mind the philosophy of true freedom, on the internet and in digital life generally [LINK NEEDED, perhaps GNU project]: exercise your freedoms with this technology as you see fit, but practice good ethics.
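Since the guide covers both NVIDIA and AMD ROCm, it can help to check which vendor tooling is present before following either setup path. The sketch below is only a rough heuristic, not an official detection method: it assumes that `nvidia-smi` (shipped with the NVIDIA driver) and `rocminfo` (shipped with ROCm) being on `PATH` indicates the corresponding stack is installed. The function name and the `GPU_TOOLS` mapping are made up for illustration.

```python
import shutil
from typing import Callable, Dict, Optional

# Assumption: the presence of these vendor tools on PATH is used as a hint
# that the matching driver stack is installed. It does not prove the GPU works.
GPU_TOOLS: Dict[str, str] = {
    "nvidia": "nvidia-smi",   # ships with the NVIDIA driver
    "amd-rocm": "rocminfo",   # ships with AMD ROCm
}


def detect_gpu_stacks(
    which: Callable[[str], Optional[str]] = shutil.which,
) -> Dict[str, bool]:
    """Report, per stack, whether its command-line tool is found on PATH."""
    return {stack: which(tool) is not None for stack, tool in GPU_TOOLS.items()}


if __name__ == "__main__":
    for stack, found in detect_gpu_stacks().items():
        print(f"{stack}: {'tool found' if found else 'tool not found'}")
```

A missing tool does not necessarily mean the hardware is unsupported; it may only mean the driver or ROCm packages have not been installed yet.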

RECOMMENDED - anaconda and conda for beginners

...

w-okada's voice changer

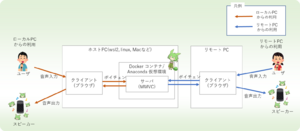

According to w-okada's documentation in English, and as implied by the name of the repository, it is an AI-powered "voice changer" tool.[4] It has numerous uses: simple enjoyment, music covers, helping with gender dysphoria, and anonymity. This makes w-okada's voice changer an extremely useful utility.

- Do not use this to catfish. We trust you understand the basic moral complications of such activities, and if you choose to do so, beware. (link to some article on the complications or what happens...)

NVIDIA setup

Setup for NVIDIA cards is particularly straightforward, especially on Arch Linux, where the drivers are readily available and work well for this application.

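Table: Functionality and Post Setup

| 4 GB VRAM cards or LESS | You will likely struggle with w-okada on cards below 4 GB (2 GB, 1 GB, etc.): expect audible glitches and considerable delay between your voice (input) and the new voice (output). |
|---|---|
| 1 GB | Likely entirely unusable or extremely slow. Untested. |
| 2 GB | Slow and not useful for real-time computation; may be better used as a Secondary/Dual GPU (potential guide here) for other applications where real-time processing is not needed. Untested. |
| ... | ... |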
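To see where a card falls relative to the 4 GB line in the table above, the total VRAM can be read from `nvidia-smi`. The following is a minimal sketch, assuming an NVIDIA card whose driver reports a numeric `memory.total` in MiB; the helper names and the `LOW_VRAM_LIMIT_GIB` constant are illustrative and not part of w-okada.

```python
import subprocess
from typing import List

# Threshold taken from the table above ("4 GB VRAM cards or LESS").
LOW_VRAM_LIMIT_GIB = 4


def parse_vram_mib(smi_output: str) -> List[int]:
    """Parse one memory.total value (MiB) per non-empty output line."""
    return [int(line.strip()) for line in smi_output.splitlines() if line.strip()]


def likely_to_struggle(vram_mib: int) -> bool:
    """True if the card is at or below the 4 GB line from the table."""
    return vram_mib <= LOW_VRAM_LIMIT_GIB * 1024


def query_nvidia_vram_mib() -> List[int]:
    """Ask nvidia-smi for total VRAM per GPU; requires the NVIDIA driver."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_vram_mib(result.stdout)


if __name__ == "__main__":
    for index, mib in enumerate(query_nvidia_vram_mib()):
        verdict = "expect glitches and delay" if likely_to_struggle(mib) else "above the 4 GB line"
        print(f"GPU {index}: {mib} MiB - {verdict}")
```

The parser expects plain integers; if the driver reports a non-numeric value for a GPU, `parse_vram_mib` raises `ValueError` rather than guessing.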

AMDGPU setup

...

Stable Diffusion Setup - WebUI

According to the project's main page, the Stable Diffusion WebUI is a "web interface for Stable Diffusion" that generates images either from text prompts or from existing images (image-to-image).[5]

Large Language Models - Ollama, Mistral, etc.

(Someone more experienced can help)

1. https://developer.nvidia.com/blog/nvidia-hopper-architecture-in-depth/

2. Elad, Barry. Linux Statistics 2024 by Market Share, Usage Data, Number Of Users and Facts.

3. https://github.com/w-okada/voice-changer

4. GitHub, voice-changer.

5. AUTOMATIC1111, stable-diffusion-webui. Main Page.